In a research paper [1] co-authored with David Looney, to be presented at the International Conference on Acoustics, Speech and Signal Processing (ICASSP) in Seoul, South Korea, on April 18, 2024, we answer the following question:

When does the acoustic environment provide helpful information for presentation attack detection (PAD)?

In this blog post, we first explain what a presentation attack is, how it can be detected, and which factors make it discernible. With these fundamentals in place, we then summarize the key findings of our research.

What is a presentation attack?

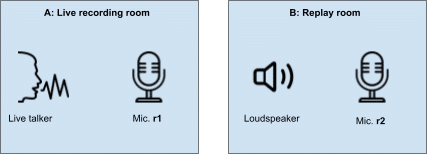

A presentation attack consists of a voice being captured by a recording device r1 in room A, then played back and captured by a second recording device r2 in room B, as shown in Fig. 1. We distinguish between rooms A and B only to facilitate the analysis that follows; in practice, A and B could be the same room. We further assume that device r2 is used for automatic speaker verification (ASV), and that the objective of the replay is to make the ASV system on r2 believe that the live speaker is talking.

Why can you detect a presentation attack?

There are four key factors that make it possible for live speech to be separated from replayed speech:

- Microphone characteristics (imperfections) of the recording device

- Recorded speech format – sampling rate, compression, transmission artifacts

- Loudspeaker characteristics (imperfections) of the replay device

- Room acoustics of the recording room and the replay room

Does room acoustics help detection?

Now that we know what a presentation attack is and why it is possible to detect it, we look closer at the room acoustics component. Whenever speech is recorded at a distance from a microphone, it is affected by the room’s acoustics, which typically consist of noise and reverberation (due to reflections off walls and objects present in the room). In this part of the research, we focus on reverberation.

Interestingly, the problem of replay and reverberation has been studied quite extensively in the seemingly unrelated fields of music and speech reproduction. Indeed, a recent paper [2] on this topic, published in the Journal of the Acoustical Society of America, inspired our work.

One way to view the difference between a presentation attack and live speech is that live speech undergoes the reverberation of one room, whereas replayed speech passes through two reverberant settings (the recording room and the replay room). We exploit the fact that the reverberation of a room, for a particular speaker-microphone configuration, can be represented by its acoustic impulse response (AIR), which shows the evolution of the reflected sound over time. In this way, we can study the separability of live versus replayed speech using properties of acoustic impulse responses that are completely independent of the speech signals.
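The one-room versus two-room view can be sketched in a few lines of NumPy. This is a minimal illustration rather than the model used in the paper: the AIRs are synthetic (exponentially decaying Gaussian noise), the reverberation times and sampling rate are made-up values, white noise stands in for speech, and the microphone and loudspeaker responses are ignored. The names `synthetic_air`, `air_a`, `air_b`, and `air_live` are hypothetical.

```python
import numpy as np

def synthetic_air(t60_s, fs, rng):
    """Toy AIR: Gaussian noise whose amplitude decays by 60 dB after t60_s seconds."""
    n = int(t60_s * fs)
    t = np.arange(n) / fs
    return rng.standard_normal(n) * 10.0 ** (-3.0 * t / t60_s)

fs = 8000                                # assumed sampling rate in Hz
rng = np.random.default_rng(0)

air_a = synthetic_air(0.4, fs, rng)      # voice -> r1 in room A (assumed T60)
air_b = synthetic_air(0.6, fs, rng)      # loudspeaker -> r2 in room B (assumed T60)
air_live = synthetic_air(0.6, fs, rng)   # live talker -> r2 in room B

speech = rng.standard_normal(fs)         # 1 s of noise as a stand-in for speech

# Live speech passes through one room.
live = np.convolve(speech, air_live)

# Replayed speech passes through two rooms in cascade, which is
# equivalent to a single "room-in-room" impulse response.
air_replay = np.convolve(air_a, air_b)
replayed = np.convolve(speech, air_replay)
```

Because convolution is associative, any property of `air_replay` can be analysed without reference to the speech signal, which is what makes an AIR-only analysis possible.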

From that point of view, we derive both temporal and spectral properties of AIRs theoretically from established results in room acoustics. We show that the spectral metric known as the spectral standard deviation (SSD) of the AIR provides the best separation of live and replayed speech, but only when there are ‘sufficient’ distances between the audio source and the microphone at the time of recording and playback. What is ‘sufficient’ depends on the size of the rooms and on their reverberation; all of these parameters are captured in what is known in room acoustics as the critical distance.
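To make the role of distance more concrete, here is a rough numerical sketch under stated assumptions; it is not the derivation or the experimental setup from the paper. It assumes that the SSD is the standard deviation of the AIR's log-magnitude spectrum in dB, uses the common Sabine-based approximation d_c ≈ 0.057·sqrt(V/T60) for the critical distance of an omnidirectional source, and builds a toy AIR from a direct-path impulse plus a decaying noise tail whose direct-to-reverberant energy ratio is (d_c/d)². The room volumes, reverberation times, and all function names are hypothetical.

```python
import numpy as np

def critical_distance(volume_m3, t60_s, directivity=1.0):
    """Sabine-based approximation d_c = 0.057 * sqrt(Q * V / T60), in metres."""
    return 0.057 * np.sqrt(directivity * volume_m3 / t60_s)

def toy_air(distance_m, volume_m3, t60_s, fs, rng):
    """Direct-path impulse followed by a unit-energy decaying noise tail.

    The direct amplitude is d_c / d, so the direct-to-reverberant
    energy ratio equals (d_c / d) ** 2.
    """
    n = int(t60_s * fs)
    t = np.arange(n) / fs
    tail = rng.standard_normal(n) * 10.0 ** (-3.0 * t / t60_s)
    tail /= np.linalg.norm(tail)
    direct = critical_distance(volume_m3, t60_s) / distance_m
    return np.concatenate(([direct], tail))

def ssd_db(air):
    """Standard deviation (dB) of the log-magnitude spectrum of an AIR."""
    magnitude = np.abs(np.fft.rfft(air))[1:-1]   # drop the first and last bins
    return float(np.std(20.0 * np.log10(magnitude + 1e-12)))

def live_and_replay_ssd(ratio, rng, fs=8000, trials=10):
    """Mean SSD of live and replayed AIRs at a distance of ratio * d_c."""
    room_a = dict(volume_m3=40.0, t60_s=0.4)     # assumed recording room
    room_b = dict(volume_m3=60.0, t60_s=0.6)     # assumed replay room
    live, replay = [], []
    for _ in range(trials):
        d_a = ratio * critical_distance(**room_a)
        d_b = ratio * critical_distance(**room_b)
        air_a = toy_air(d_a, fs=fs, rng=rng, **room_a)
        air_b = toy_air(d_b, fs=fs, rng=rng, **room_b)
        air_live = toy_air(d_b, fs=fs, rng=rng, **room_b)
        live.append(ssd_db(air_live))
        replay.append(ssd_db(np.convolve(air_a, air_b)))
    return float(np.mean(live)), float(np.mean(replay))

rng = np.random.default_rng(0)
for ratio in (0.25, 1.0, 4.0):
    live, replay = live_and_replay_ssd(ratio, rng)
    print(f"d = {ratio:4.2f} d_c: live {live:4.1f} dB, replay {replay:4.1f} dB")
```

In this toy model, the direct path dominates close to the source, the spectrum is nearly flat, and the live and replayed SSDs are both small and close together. Well beyond the critical distance, the live SSD should approach the classical diffuse-field value of roughly 5.6 dB, while the cascade of two rooms pushes the replayed SSD noticeably higher, so the gap between the two widens with distance.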

These new theoretical insights deepen our understanding of room acoustics in PAD and pave the way for practical applications. For instance, we’ve developed a novel zero-shot convolutional neural network-based PAD approach that outperforms several baseline methods from the ASVspoof challenges. This approach is particularly powerful because it allows machine learning models to be trained without actual examples of replay attacks, and it shows promise when combined with other methods in PAD scenarios.

Conclusion

In conclusion, the role of room acoustics in PAD is not simple. It depends on several factors: the distance between the live talker and the microphone, the distance between the loudspeaker and the microphone during replay, and the volumes and reverberation of the rooms. However, if all recording and playback take place beyond the so-called critical distance, reverberation alone can be a powerful tool for detecting a replay with high accuracy.

Most importantly, the combination of data-driven machine learning development with domain-specific theoretical insights allows us to develop deepfake and replay attack detection methods that push the boundaries of the state-of-the-art.


1. N. D. Gaubitch and D. Looney, “On the role of room acoustics in audio presentation attack detection,” in Proc. ICASSP, pp. 906–910, Apr. 2024.

2. A. Haeussler and S. van de Par, “Crispness, speech intelligibility, and coloration of reverberant recordings played back in another reverberant room (Room-in-Room),” J. Acoust. Soc. Am., vol. 145, no. 2, pp. 931–942, Feb. 2019.