In case you haven’t been tracking the news over the past week: the American Medical Association has put AI-driven physician impersonation squarely on the healthcare policy agenda.

In its April 2026 policy framework, the AMA called for formal protections against deepfake impersonation of physicians, warning that synthetic audio and video can mislead patients, influence clinical decisions, and erode trust in care delivery. This is not a narrow technical concern. It is a systemic one. When the largest physician organization in the United States elevates an issue to this level, it signals that something fundamental has shifted in how risk is understood.

What makes this moment more significant is that the AMA is not acting in isolation.

Over the past several months, a clear and consistent pattern has emerged across healthcare associations, federal agencies, and cybersecurity authorities:

- The American Hospital Association has issued guidance on AI-enabled scams targeting healthcare staff.

- CMS issued a Request for Information (RFI) to inform potential program-integrity measures, including those that shape how CMS and healthcare organizations identify and protect against fraud, waste, and abuse.

- The FBI has released alerts describing the use of synthetic voice impersonation in active attacks.

- HHS has highlighted helpdesk social engineering, often executed through phone-based impersonation, as a significant healthcare cyber risk.

- NIST has updated its identity framework to explicitly account for synthetic media and fraud-resistant authentication.

- Regulators, including the FTC, are increasing scrutiny of AI-driven impersonation and its implications for fraud and consumer protection.

What’s changed in the last six months

Across these organizations, identity risk is being defined in a consistent way:

- Healthcare associations are framing AI impersonation as an operational and patient safety issue.

- Federal agencies are treating synthetic voice and deepfake attacks as active threat vectors.

- Cybersecurity frameworks are evolving to require stronger, fraud-resistant identity controls.

- Regulators are signaling that identity assurance is becoming a more concrete compliance expectation.

Individually, each of these developments might be interpreted as incremental. Taken together, they represent a transition from awareness to expectation.

Healthcare is being pushed toward a stronger operating principle: identity must be verified before trust is granted.

Deepfakes are the most visible expression of this shift, but they are not the root problem. The deeper issue is that many healthcare systems, across many of their most critical workflows, still rely on identity signals that are increasingly unreliable against AI-driven impersonation.

Every day, high-risk decisions are made based on assumed identity. A physician calls regarding a patient. A member requests a change to their account. A provider verifies benefits. An employee contacts the IT helpdesk for access support. A recruiter holds a virtual interview with a nurse for an open role. In each of these scenarios, the system relies on signals that were never designed to withstand AI-driven impersonation.

For years, those signals were sufficient. Knowledge-based authentication, one-time passwords, caller ID, and agent judgment provided a workable balance between security and usability. These methods were built for a world in which impersonation required effort, coordination, and time. That constraint imposed a natural limit on attack scale.

That constraint is eroding quickly.

AI has fundamentally changed the economics of impersonation. Attackers can now operate at machine speed, leveraging breached data to pass knowledge-based authentication at scale, intercepting or socially engineering one-time passwords in real time, spoofing phone numbers and device signals, and generating synthetic voices that can be difficult for humans to distinguish from legitimate callers.

What is most important about this shift is not just the introduction of new attack techniques. It is the exposure of a deeper vulnerability: the controls that healthcare relies on were not designed for this environment.

The controls haven’t kept up

The current model of identity verification in healthcare is under increasing strain, and in many cases, it is already failing.

Caller ID and automatic number identification (ANI), once considered useful signals, are now widely understood to be spoofable. Even human judgment, long relied upon as a final safeguard, is no longer reliable when synthetic voices can convincingly replicate tone, cadence, and intent.

In practice, the effectiveness of these controls has eroded significantly, according to the 2025 Voice Intelligence and Security Report:

- Knowledge-based authentication is bypassed more than 50% of the time

- One-time passwords are bypassed in roughly 25% of attacks

At that level of failure, these are not isolated weaknesses. They are systemic.

In response to these shortcomings, guidance from NIST has moved away from treating knowledge-based authentication as a strong standalone identity control method. Fraud and consumer protection agencies continue to highlight the weaknesses of one-time passwords, particularly in the face of social engineering and real-time interception attacks.

It takes time to adopt new guidance, but attackers are not waiting.

Attackers are no longer attempting to break authentication controls. They are designing their operations around them, using predictable verification steps as part of the attack path itself.

This is already happening

What makes this shift urgent is that it is not theoretical. It is already playing out in real environments at scale.

In a large healthcare financial services environment, attackers used automated voice bots to probe IVR systems, extract account information, and escalate to live agents. Over a period of weeks, this activity resulted in 4,500 unique calls and $18M in exposed account value before the pattern was identified and contained.[1]

In another organization, enabling deepfake detection surfaced more than 50 suspected synthetic workers linked to DPRK operations, individuals who had successfully navigated hiring and onboarding processes using AI-generated identities. These were not external attackers attempting to breach the perimeter; they were embedded within the organization, operating under assumed identities that had been accepted as legitimate.[1]

In a provider environment, a deepfake-enabled social engineering attack can originate in the patient call center, be routed to the IT helpdesk, and result in an unauthorized credential reset and downstream system compromise.

These examples are different in execution, but consistent in structure. They do not begin with technical exploitation of systems or infrastructure. They begin with identity being assumed, accepted, and trusted. And the financial and operational consequences are significant.

What this means going forward

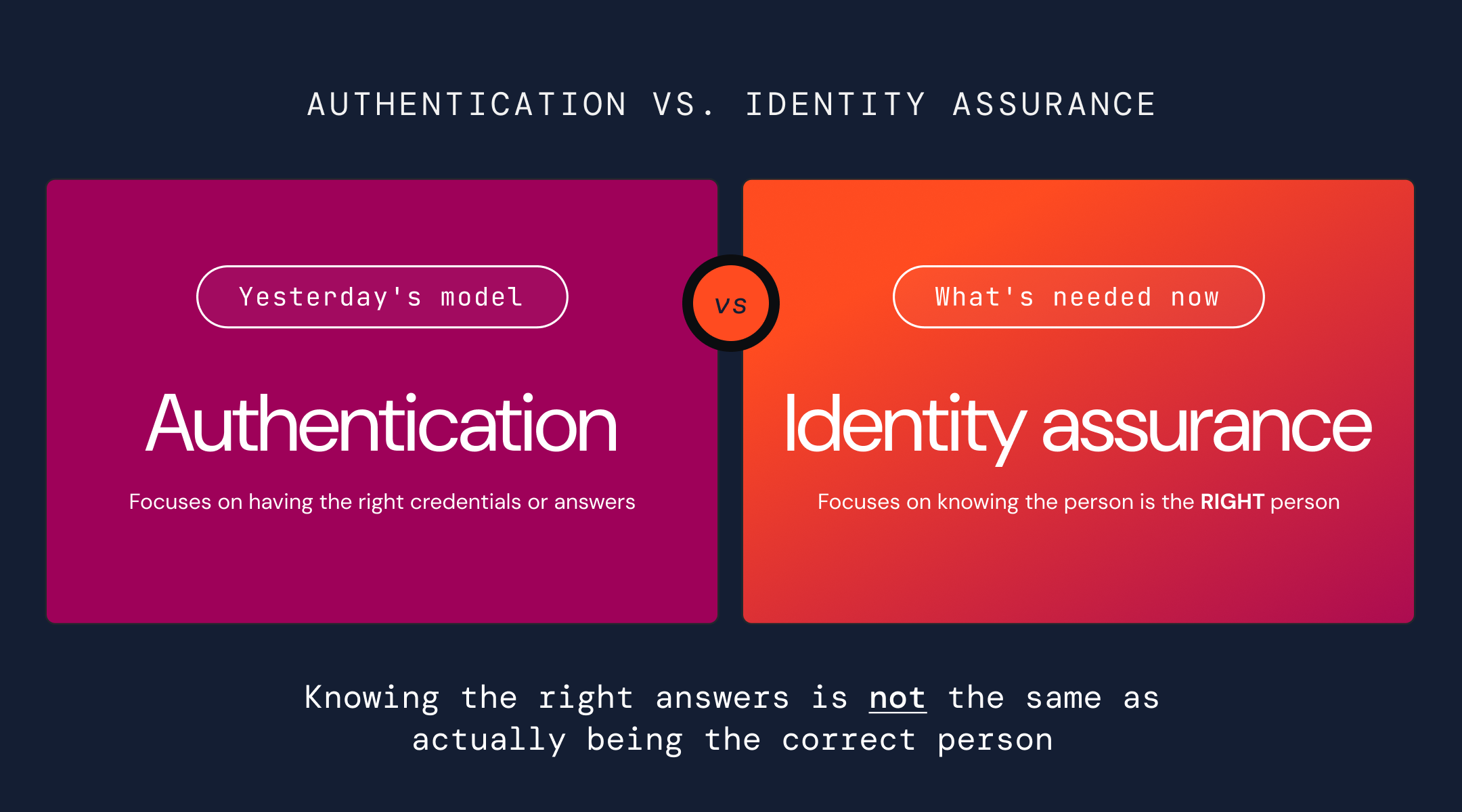

The shift underway in healthcare is not simply a matter of strengthening existing controls. It represents a fundamental transition from authentication to identity assurance, reinforced by emerging policy, regulatory, and protection expectations.

Authentication asks whether a user can provide the correct credentials or responses. Identity assurance asks whether the person providing them is who they claim to be. Knowing the right answers is not the same as being the right person.
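To make the distinction concrete, here is a minimal Python sketch. It is illustrative only: the `CallerEvidence` fields, the function names, and the choice of which signals count toward assurance are assumptions made for this example, not a description of any specific product, standard, or vendor control.

```python
from dataclasses import dataclass


# All names here are hypothetical and exist only to illustrate the idea.
@dataclass(frozen=True)
class CallerEvidence:
    answered_kba: bool       # caller knew the "right answers"
    otp_confirmed: bool      # caller relayed a one-time password
    caller_id_matches: bool  # caller ID / ANI matched the record on file
    liveness_verified: bool  # assumed signal: voice judged live, not synthetic
    bound_credential: bool   # assumed signal: credential tied to the enrolled person


def is_authenticated(e: CallerEvidence) -> bool:
    """Authentication: did the caller supply the expected responses?"""
    return e.answered_kba and e.otp_confirmed


def is_identity_assured(e: CallerEvidence) -> bool:
    """Identity assurance: is there evidence tied to the person themselves?

    Caller ID is deliberately ignored because it can be spoofed.
    """
    return e.liveness_verified and e.bound_credential


def allow_high_risk_action(e: CallerEvidence) -> bool:
    """Verify identity before granting trust, e.g. for a credential reset."""
    return is_authenticated(e) and is_identity_assured(e)


# An impersonator holding breached data and an intercepted one-time password
# passes authentication but fails identity assurance.
impostor = CallerEvidence(
    answered_kba=True,
    otp_confirmed=True,
    caller_id_matches=True,
    liveness_verified=False,
    bound_credential=False,
)
print(is_authenticated(impostor))        # True
print(allow_high_risk_action(impostor))  # False
```

The point of the sketch is the gap between the two checks: every signal the impostor presents is one that can be stolen, intercepted, or spoofed, so a high-risk action is gated on evidence bound to the person rather than on what the caller knows.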