The number every security leader should sit with

AI-driven attacks increased 1,210% in 2025. That figure spans 12 months of our own data.

This isn’t a trend. It’s a structural shift. Attackers didn’t just add AI to their toolkit; they rebuilt their operations around it. Cheaper to run. Harder to detect. Startlingly scalable. Today’s attackers don’t get tired, don’t act on emotion, and never use the same face or voice twice. They train models, and those models work non-stop.

The attack vectors are everywhere. In contact centers, bots hit IVR systems not to drain funds immediately but to learn. They probe for weak points, validate breached data, and come back smarter. In video meetings, a synthetic CFO requests an urgent wire transfer, and the employee sees no reason for alarm. In healthcare, one major provider uncovered more than 15,000 unique bot fraud calls in a single summer.

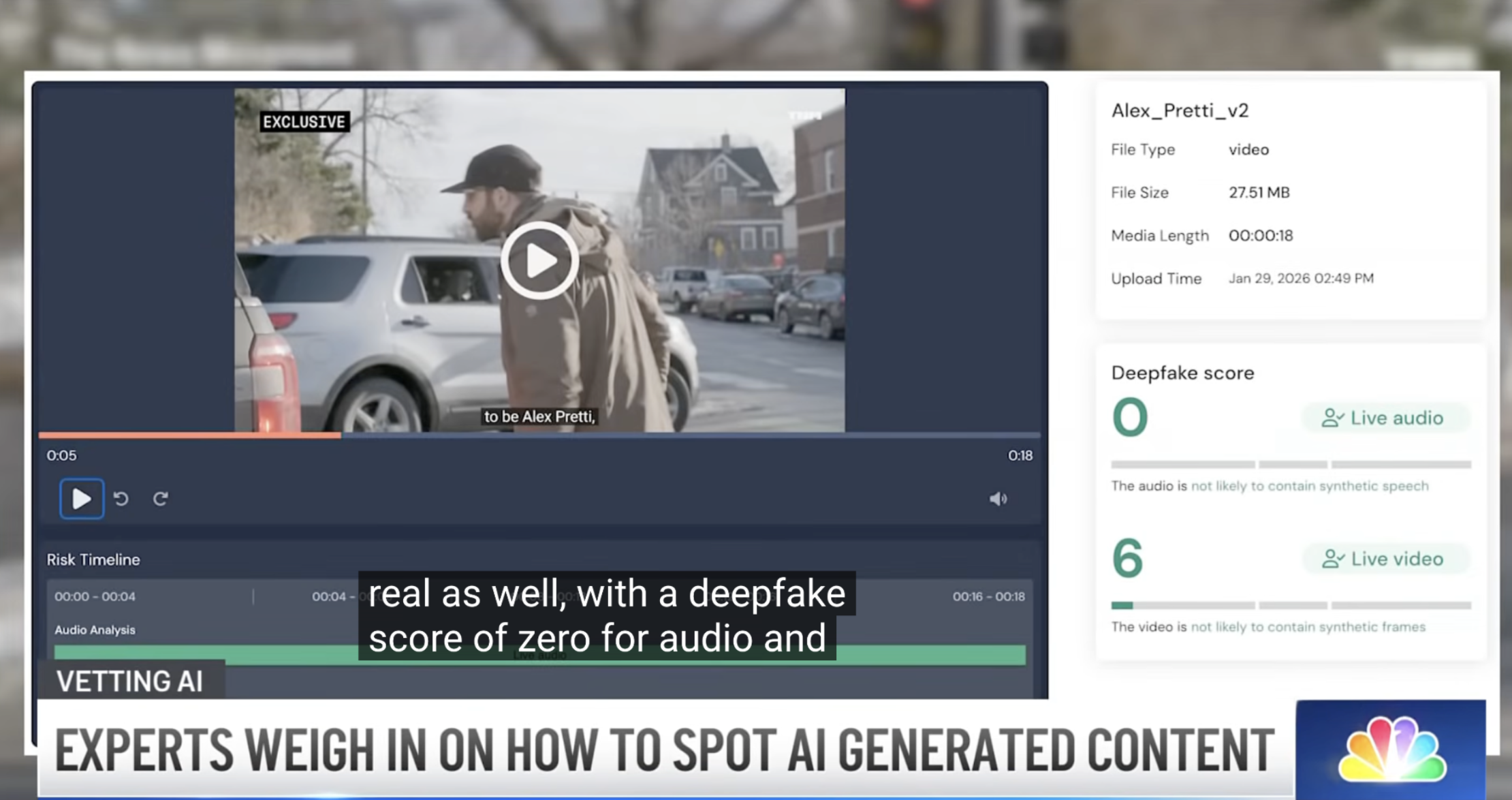

Meanwhile, people correctly identify AI-generated content only about 50% of the time, which is no better than a coin flip. Awareness alone isn’t the fix, and the data makes that clear.

Traditional attacks (social engineering, phishing, vishing) are still rising. But AI-backed schemes are outpacing them 6x. More fraudsters are making the switch because it works.

In 2025, AI became a magnifying force for fraud, growing faster than both non-AI fraud and the defenses built to stop it.

What this means if you’re a CISO

Identity is the new perimeter. Attackers aren’t breaking through walls anymore; they’re walking in the front door by impersonating someone else. And AI has made that impersonation cheap, scalable, and disturbingly convincing.

The old authentication model assumed that if someone knew the right things (a password, a security question, a one-time code), they were who they claimed to be. That assumption is broken. Knowledge-based authentication wasn’t built for a world where synthetic voices bypass IVR systems, deepfaked executives approve wire transfers, and 25% of job candidate profiles could be fake by 2028.

The shift security leaders need to make: stop treating authentication as a one-time credential check and start treating it as real-world identity assurance. In the AI era, that means answering three questions, in this order, for every interaction.

Is it a machine?

Before anything else, you need to know whether you’re dealing with a human or a bot. AI-powered bots don’t probe once and move on; they learn your systems, validate breached data, and return smarter.

Does it have the right intent?

A real human isn’t automatically a safe one. Social engineering, insider threats, and account takeover all involve legitimate-looking actors with malicious goals. Intent signals matter.

Is it who they claim to be?

Only once you’ve cleared the first two questions does traditional identity verification even apply. And even then, “the right credentials” isn’t enough anymore: you need to verify that a real, present human is behind the interaction.
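The three-question ordering can be pictured as a short-circuiting pipeline, where each check gates the next and a failure stops evaluation. The sketch below is a minimal, hypothetical illustration: the `Interaction` fields, the signal names (`bot_likelihood`, `intent_risk`, `liveness_score`), and the threshold values are assumptions made for the example, not a description of any specific product or detection model.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    BLOCK_MACHINE = "blocked: likely machine"
    BLOCK_INTENT = "blocked: risky intent"
    STEP_UP = "step-up: identity not assured"
    ALLOW = "allow"


@dataclass
class Interaction:
    # Hypothetical upstream signals; scores are assumed to lie in [0.0, 1.0].
    bot_likelihood: float    # likelihood the caller or user is automated/synthetic
    intent_risk: float       # e.g. account-takeover or social-engineering patterns
    credentials_valid: bool  # the traditional check: password, OTP, etc.
    liveness_score: float    # confidence a real, present human is behind it


# Illustrative thresholds only (assumptions); real values would need tuning.
BOT_THRESHOLD = 0.7
INTENT_THRESHOLD = 0.6
LIVENESS_THRESHOLD = 0.8


def assess(event: Interaction) -> Verdict:
    """Answer the three questions in order, stopping at the first failure."""
    # 1. Is it a machine?
    if event.bot_likelihood >= BOT_THRESHOLD:
        return Verdict.BLOCK_MACHINE
    # 2. Does it have the right intent?
    if event.intent_risk >= INTENT_THRESHOLD:
        return Verdict.BLOCK_INTENT
    # 3. Is it who they claim to be? Valid credentials alone are not enough;
    #    also require evidence of a real, present human.
    if not event.credentials_valid or event.liveness_score < LIVENESS_THRESHOLD:
        return Verdict.STEP_UP
    return Verdict.ALLOW


# A caller with valid credentials but a strong bot signal is stopped at step 1,
# before the credential check is ever consulted.
print(assess(Interaction(0.9, 0.1, True, 0.95)).value)  # blocked: likely machine
```

The ordering is the point of the sketch: because bots can validate breached credentials, a correct password is only consulted after the machine and intent checks have passed, so it cannot short-circuit the decision on its own.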

The organizations that adapt won’t be the ones that detect the most deepfakes. They’ll be the ones that systematically rebuild how they verify identity across every surface where it can be faked.

This recognition belongs to the customers who trusted us before this problem had a name. We’re not done. Not even close.