Last Friday, GitLab’s Threat Intelligence Team published a report on North Korean tradecraft, detailing how DPRK-aligned threat actors use “Contagious Interview” campaigns and fake IT worker scams to spread malware and generate revenue for the sanctioned nation. The report offers one of the clearest public views into North Korean IT worker operations: structured revenue tracking, synthetic identity pipelines, VPN infrastructure, and facilitator-based laptop hosting.


Extending GitLab’s Findings: What Hiring Telemetry from Pulse for Meetings Reveals

Sarosh Shahbuddin

Senior Director, Product Management • February 25, 2026 (UPDATED ON February 25, 2026)

Katelyn Halbert, Senior Talent Partner


Their case study findings show:

- Financial records from an IT worker cell: Fake personas generated $1M+ in revenue from 2022 to 2025

- Mass creation of synthetic identities: 100+ fake personas with professional connections and networks that seem real

- One worker with 21 unique personas: A web of fake identities going back to one nation-state actor

- Operating from Russia: One North Korean IT worker worked for U.S. organizations while located in Moscow, Russia

These are elaborate, strategic attacks designed to exploit a new entry point into enterprises: the hiring process.

After reviewing their findings, we examined confirmed fraudulent applicants across a number of engineering roles and compared those cases against broader suspicious activity observed in our hiring telemetry.

The goal here is to document measurable, repeatable patterns, demonstrating that it is no longer sufficient to rely solely on deepfake detection.

Pindrop Research: Fraud runs rampant in job applicant pools

GitLab’s research mirrors what we at Pindrop found in our own hiring pipeline, which included DPRK-affiliated applicants. Our analysis showed:

- 1 in 6 applicants exhibited clear signs of fraud

- 1 in 343 applicants were linked to North Korea

- 1 in 4 North Korean applicants used a deepfake during a live interview

Device telemetry

Across all confirmed fraudulent applicants who booked an interview, operating system distribution differed materially from baseline applicant traffic. In legitimate applicant traffic, modern operating systems dominate. Here, not a single confirmed case originated from Windows 11 or macOS 13+.

| Operating System | Confirmed Fraud Cases (%) |
|---|---|
| Windows 10 (2015) | 59% |
| macOS 10.15 Catalina (2019) | 31% |
| Linux | 9% |
| Windows 11 (2021) | 0% |
| macOS 13+ | 0% |

The complete absence of modern systems across confirmed cases is consistent with centralized device pools, reused hardware, or virtualized environments rather than independently maintained personal machines.
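A distribution like the one in the table can be tallied from per-session OS labels. The sketch below is a minimal illustration, assuming each session already carries a parsed OS label. The `CURRENT_GEN` set, the function names, and the sample values are hypothetical; they are not Pindrop's implementation or data.

```python
from collections import Counter

# Assumption: which labels count as "current-generation" is a local policy choice.
CURRENT_GEN = {"Windows 11", "macOS 13", "macOS 14", "macOS 15"}


def os_distribution(os_labels):
    """Return {os_label: percentage share} for a list of OS labels."""
    counts = Counter(os_labels)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {name: round(100 * n / total, 1) for name, n in counts.items()}


def current_gen_share(os_labels):
    """Percentage of sessions whose OS label is in CURRENT_GEN."""
    if not os_labels:
        return 0.0
    hits = sum(1 for name in os_labels if name in CURRENT_GEN)
    return round(100 * hits / len(os_labels), 1)


# Made-up sample for illustration only (not the article's dataset).
sample = ["Windows 10"] * 6 + ["macOS 10.15"] * 3 + ["Linux"]
print(os_distribution(sample))
print(current_gen_share(sample))
```

Comparing `current_gen_share` for a confirmed-fraud cohort against the same figure for baseline traffic is one simple way to quantify the gap described above.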

Geography & network characteristics

Network signals tell a similar story. Most confirmed fraudulent sessions appeared to be domestic by IP address.

| IP Characteristic | Percentage |
|---|---|
| VPN usage detected | 29.6% |
| Non-U.S. IP origin | 41% |
| U.S. IP origin | 59% |

GitLab documented facilitator-based laptop hosting and in-country device access. This model may help explain why 59% of confirmed fraudulent sessions in our dataset originated from U.S. IP addresses. If a device is physically located in the U.S. but operated remotely, IP geolocation reflects the device’s location, not necessarily the operator’s.

At the same time, 41% of confirmed fraudulent applicants connected from non-U.S. IP addresses. We predominantly hire within the United States, and interviews that originate outside the U.S. are flagged within Pindrop Pulse for Meetings for additional review.

VPN usage presents a different question. Roughly 30% (29.6%) of confirmed fraudulent applicants were using VPNs. In a subsequent review, we will compare VPN usage rates against non-fraudulent interview cohorts to determine whether the delta is statistically meaningful. We do not automatically escalate risk based solely on VPN presence, since many legitimate candidates use commercial VPN services.
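One way such a cohort comparison could be run is a two-proportion z-test. The sketch below is an assumption about method, not the analysis Pindrop has committed to, and every count in it is hypothetical. With small cohorts, an exact test (such as Fisher's) may be the better choice.

```python
import math


def two_proportion_z_test(hits_a, n_a, hits_b, n_b):
    """Two-sided z-test for a difference between two proportions.

    Returns (z, p_value). Uses the pooled standard error under the
    null hypothesis that both cohorts share one underlying rate.
    """
    p_a = hits_a / n_a
    p_b = hits_b / n_b
    pooled = (hits_a + hits_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; the test is undefined")
    z = (p_a - p_b) / se
    # Two-sided p-value for a standard normal: 2 * (1 - Phi(|z|)) = erfc(|z| / sqrt(2))
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: VPN sessions in a fraud cohort vs. a non-fraud cohort.
z, p = two_proportion_z_test(hits_a=8, n_a=27, hits_b=30, n_b=300)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value would suggest the VPN rate differs between cohorts, but it would not by itself justify escalating on VPN presence alone.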

However, certain VPN services do trigger elevated scrutiny, particularly when the associated IP address or ASN matches known indicators of compromise (e.g., public IOC lists such as those maintained by Mandiant).
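The IP and ASN matching described above can be sketched with Python's standard `ipaddress` module. This is a minimal illustration under stated assumptions: the IOC ranges are reserved documentation networks, the ASN comes from the private-use range, and the function names are hypothetical. A real deployment would load indicators from a maintained feed.

```python
import ipaddress


def build_ioc_networks(cidrs):
    """Parse CIDR strings into network objects once, up front."""
    return [ipaddress.ip_network(cidr, strict=False) for cidr in cidrs]


def matches_ioc(ip, ioc_networks, asn=None, ioc_asns=frozenset()):
    """Return True if the IP falls inside any IOC network or the ASN is listed."""
    addr = ipaddress.ip_address(ip)
    in_network = any(addr in net for net in ioc_networks)
    asn_listed = asn is not None and asn in ioc_asns
    return in_network or asn_listed


# Placeholder indicators (documentation ranges and a private-use ASN).
IOC_NETWORKS = build_ioc_networks(["192.0.2.0/24", "198.51.100.0/24"])
IOC_ASNS = frozenset({64512})

print(matches_ioc("192.0.2.10", IOC_NETWORKS))                        # inside a listed range
print(matches_ioc("203.0.113.5", IOC_NETWORKS))                       # not listed
print(matches_ioc("203.0.113.5", IOC_NETWORKS, asn=64512, ioc_asns=IOC_ASNS))
```

Keeping the VPN check separate from the IOC check mirrors the policy in the text: VPN presence alone does not escalate, while a match against known indicators does.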

If we group confirmed fraud cases by geography, we see:

| Region | Percentage |
|---|---|
| United States | 59% |
| South / Central Asia | 33% |
| Sub-Saharan Africa | 4% |
| Russia | 4% |

GitLab’s report includes geography in a different form than our dataset. Their write-up ties specific clusters to assessed operating locations (for example, activities they associated with Moscow and Beijing) and separately documents facilitator recruitment in the U.S. and U.K. to host laptops for remote access. There is directional overlap: we see a non-trivial share of confirmed cases originating from U.S. IP space alongside a smaller cluster from Russia.

Ultimately, geography remains a valid control when candidates drift from expected hiring regions, regardless of whether they’re tied to DPRK activity.

Email patterns

GitLab also revealed, “Threat actors created accounts using Gmail email addresses in almost 90% of cases. We observed custom email domains in only five cases, all relating to organizations we assess are likely front companies controlled by North Korean threat actors.”

In the appendix of GitLab’s report, we noticed developer-themed email address patterns appearing repeatedly in the account list associated with their observed activity. Within that dataset:

| Email Pattern | Count (GitLab) |
|---|---|
| Contains “dev” | 26 |
| Contains “work” | 10 |
| Contains “code” | 1 |

After reviewing that breakdown, we applied the same lens to our own suspicious cohort (1,433 flagged resumes).

Within that group:

| Username Pattern | Count (Our Data) |
|---|---|
| Contains “dev” | 137 |
| Contains “work” | 40 |
| Contains “code” | 13 |
| Contains “tech” | 27 |

GitLab’s appendix shows repeated keyword patterns across attributed accounts. In our dataset, those same keywords appear in materially higher counts within the suspicious cohort (137 usernames containing “dev” versus 26 in GitLab’s list). GitLab also noted that roughly 90% of accounts tied to their observed activity used Gmail addresses. We saw the same roughly 90% Gmail share in our suspicious cohort.
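The keyword tally above amounts to a substring check on the local part of each email address. The sketch below is a minimal illustration: the keyword list comes from the tables, while the function names and sample addresses (on reserved example domains) are hypothetical.

```python
KEYWORDS = ("dev", "work", "code", "tech")


def keyword_counts(emails, keywords=KEYWORDS):
    """Count addresses whose local part (before '@') contains each keyword."""
    counts = {keyword: 0 for keyword in keywords}
    for email in emails:
        local_part = email.split("@", 1)[0].lower()
        for keyword in keywords:
            if keyword in local_part:
                counts[keyword] += 1
    return counts


def domain_share(emails, domain):
    """Percentage of addresses that use the given domain."""
    if not emails:
        return 0.0
    suffix = "@" + domain.lower()
    hits = sum(1 for email in emails if email.lower().endswith(suffix))
    return round(100 * hits / len(emails), 1)


# Made-up addresses for illustration only.
sample_emails = [
    "alex.dev@example.com",
    "codeworks99@example.com",
    "sam@example.org",
]
print(keyword_counts(sample_emails))
print(domain_share(sample_emails, "example.com"))
```

Plain substring matching will also hit ordinary names (for example, a username containing "devon"), so counts like these are better treated as a weak signal to correlate with others than as a verdict.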

Synthetic identity construction

One of my favorite callouts from the GitLab research is the identity fabrication workflow they discovered (summarized below).

| Stage | Technique |
|---|---|
| Image Collection | Scraping photos from social media and AI image generators |
| Face Manipulation | Using faceswapper.ai to generate identity-document-style headshots |
| Document Creation | Generating passports via VerifTools |
| Watermark Removal | Automated Photoshop (.atn) routine to remove VerifTools watermarks |
| Account Creation | Creating email and professional networking accounts |

The primary detail that stood out was that the fabricated passports were used operationally. GitLab documented that fake passports were used to obtain enhanced identity verification on professional networking platforms. We’ve noticed this in at least two cases where the candidate matched at least one other indicator for a North Korean IT worker (e.g., IP address, email address, or phone number).

This was the first time we had seen deepfakes pass a document verification process.

From one suspicious node to a cluster

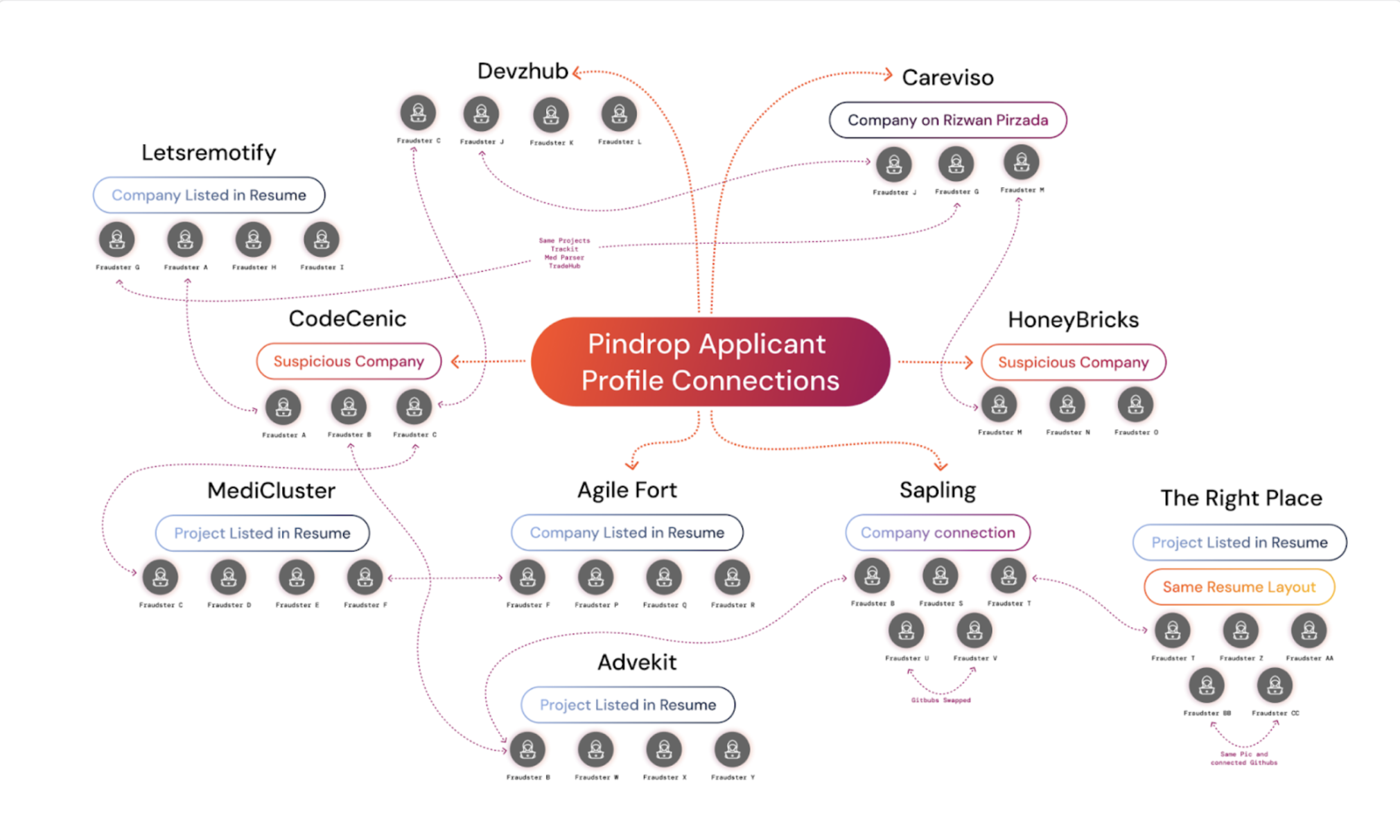

Once an applicant is confirmed fraudulent, we extract available attributes, including emails, phone numbers, resume templates, GitHub repositories, project descriptions, profile images, and build a relational graph across historical applicants.

Image: Pindrop research exposes an interconnected web of suspected shell companies and fraudulent job applicants.

In one instance, pivoting from a single confirmed case surfaced 23 additional suspicious applicants dating back to 2023. The overlaps were structural:

| Attribute Type | Observed Pattern |
|---|---|
| Resumes | Identical resumes with different names |
| Employers | Obscure companies appearing repeatedly |
| GitHub | Reused or swapped repositories |
| Contact Data | Overlapping phone numbers or email structures |

The connections were not obvious during resume review. They emerged only after graph expansion. A confirmed identity becomes a pivot node. Shared artifacts define the cluster boundary.
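The pivot-and-expand idea can be sketched as a breadth-first search over shared artifacts. This is a minimal illustration and not Pindrop's pipeline: it assumes each applicant has already been reduced to a set of `(attribute_type, value)` pairs, and all identifiers and values below are hypothetical.

```python
from collections import defaultdict, deque


def expand_cluster(applicants, seed_id):
    """Return every applicant reachable from seed_id through shared artifacts.

    applicants: {applicant_id: set of (attribute_type, value) tuples}
    """
    # Invert the mapping so each artifact points at the applicants that share it.
    by_artifact = defaultdict(set)
    for applicant_id, artifacts in applicants.items():
        for artifact in artifacts:
            by_artifact[artifact].add(applicant_id)

    cluster = {seed_id}
    queue = deque([seed_id])
    while queue:
        current = queue.popleft()
        for artifact in applicants.get(current, ()):
            for neighbor in by_artifact[artifact]:
                if neighbor not in cluster:
                    cluster.add(neighbor)
                    queue.append(neighbor)
    return cluster


# Made-up applicants for illustration only.
applicants = {
    "A": {("phone", "555-0100"), ("resume_hash", "r1")},
    "B": {("resume_hash", "r1"), ("github", "repo-x")},
    "C": {("github", "repo-x")},
    "D": {("phone", "555-0199")},
}
print(sorted(expand_cluster(applicants, "A")))
```

Here "C" shares nothing directly with the seed "A" and is reached only through "B", which is the kind of link that does not show up in a one-at-a-time resume review. In practice, very common artifacts (a popular resume template, for instance) would need to be excluded or down-weighted so clusters do not merge spuriously.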

Beyond deepfakes: What hiring telemetry actually shows

GitLab documented the structured backend of persona cultivation. Hiring telemetry exposes the operational artifacts that support that structure.

Individually, the signals are subtle. Correlated across device, network, resume artifacts, and cluster expansion, they are not.

When we originally built Pulse for Meetings, the focus was on detecting emerging deepfake fraud in audio and video. What we have learned mirrors what we see in contact centers: AI-generated media is only a small percentage of overall impersonation activity.

Most confirmed cases surface through other patterns and correlated signals, not synthetic video manipulation alone. Detection systems, therefore, need to capture broad operational telemetry, not just deepfake artifacts.

For organizations evaluating their own remote hiring processes, understanding what signals are available during interviews is a practical starting point.