One meeting could cost you millions.

Deepfake candidates in virtual interviews

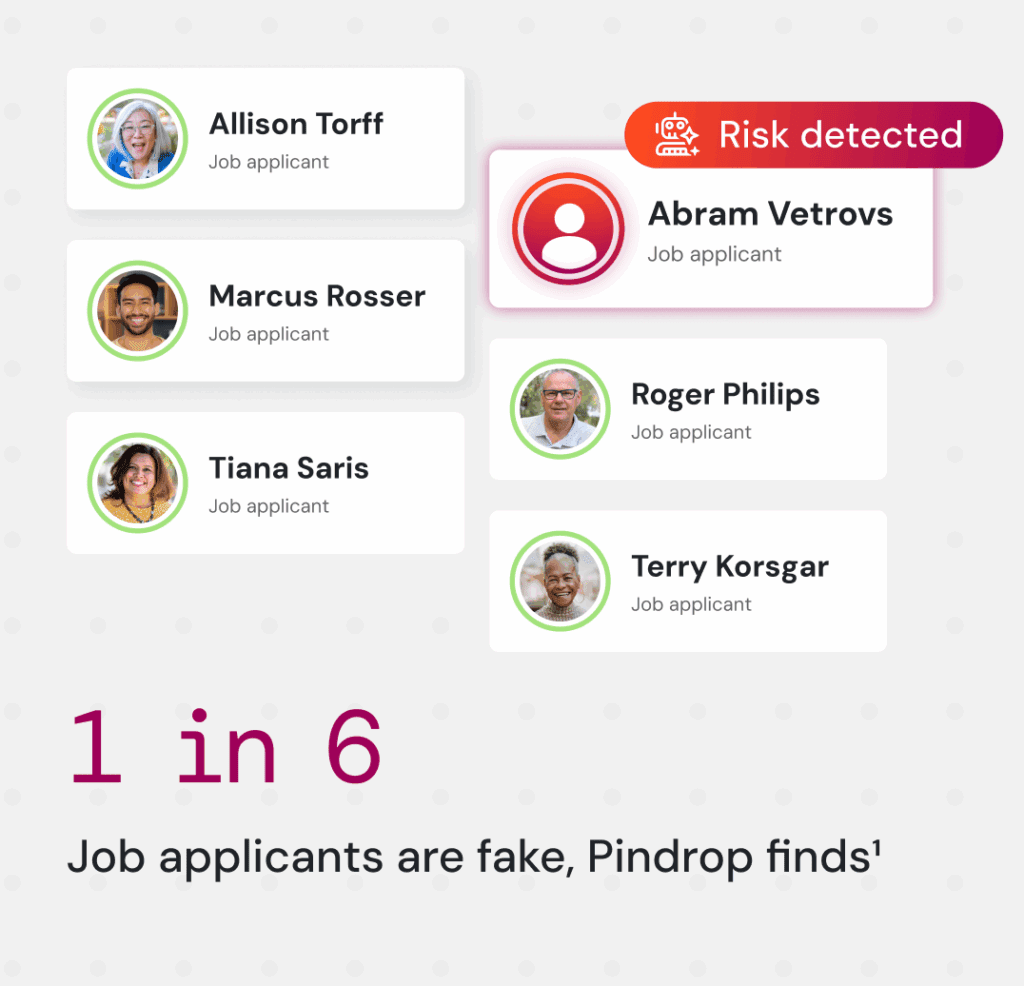

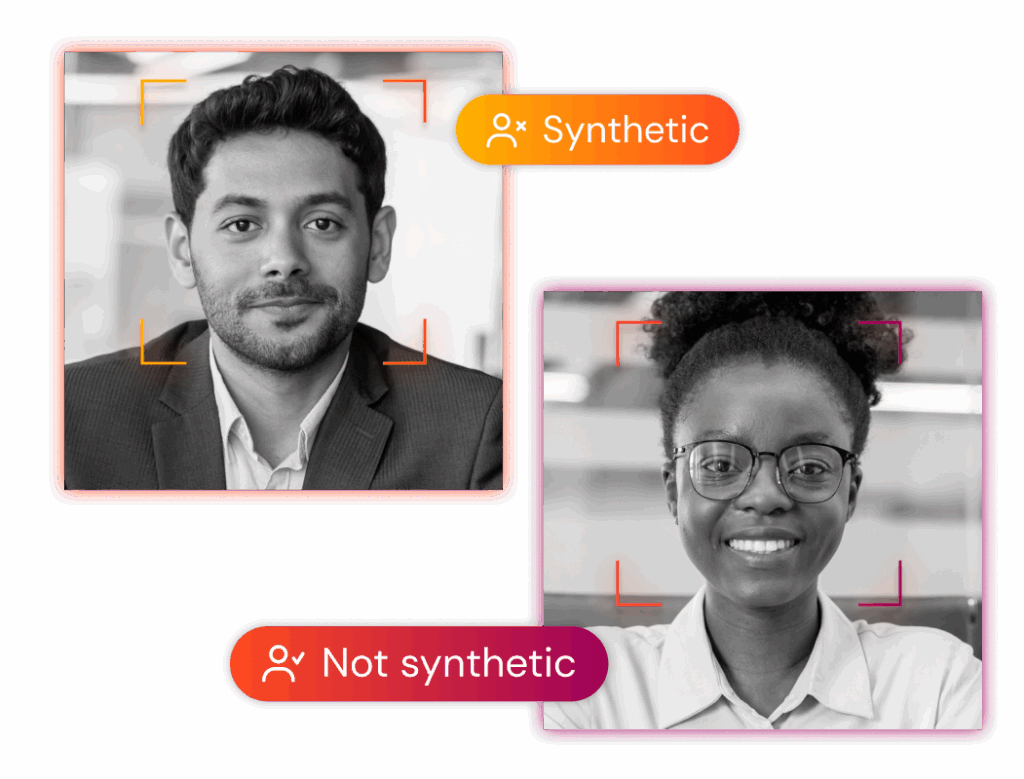

Recruiters now face a new wave of synthetic applicants: fake candidates using AI-generated faces, voices, or even live proxies to impersonate real professionals. In Pindrop’s own data, 1 in 6 applicants showed signs of fraud, and 1 in 4 DPRK-linked candidates used a deepfake during live interviews.¹ Pulse verifies both the “real human” and the “right human,” authenticating voices and detecting face swaps before a bad hire compromises your systems.

Fraudulent authorizations

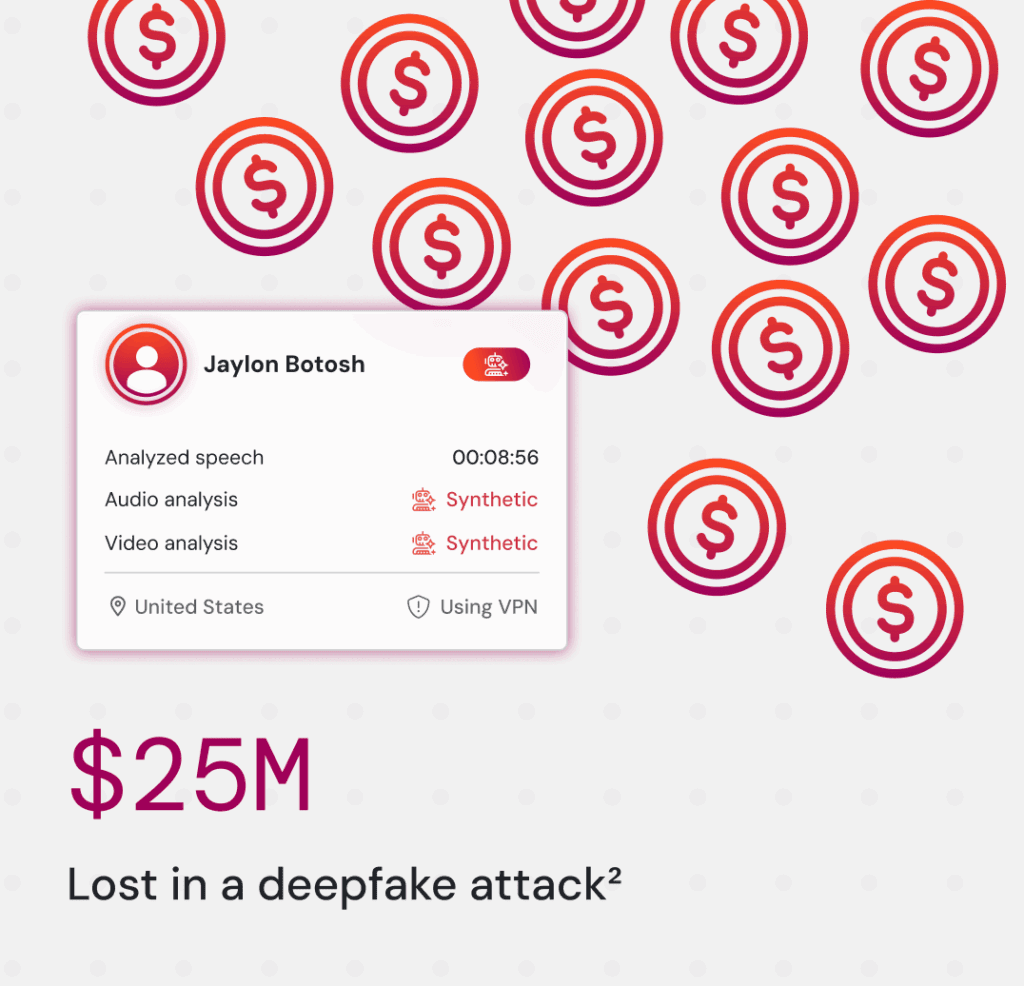

Deepfakes have already triggered multimillion-dollar corporate losses. In one case, a finance worker wired $25 million after attending a video meeting with deepfake versions of his CFO and team.² Pulse analyzes speech and video in real time to identify impersonation attempts, ensuring that every decision made in a call comes from the right person.

Fake employees and insider threats

Nation-state actors have begun using synthetic identities to infiltrate corporate meetings, seeking access to IP or internal strategy sessions. Pindrop’s research shows 1 in 343 fake applicants were linked to North Korea, with many using manipulated video or VPNs to mask their true location. Pulse flags these anomalies through continuous location intelligence, alerting teams when a participant’s signal doesn’t match their claimed geography.

From job interview to cyber breach: What Pindrop data reveals

Pindrop found 1 in 343 fake applicants had IP addresses linked to DPRK proxy infrastructure.

Ready to defend your meetings?

Deepfake detection in meetings FAQs

Pindrop® Pulse for meetings verifies whether the meeting participant is a “real human” and also whether they are the “right human” by analyzing speech and video in real time to detect synthetic voices, face swaps, impersonation, and other tactics.

Yes. In Pindrop’s data, 1 in 6 applicants showed signs of fraud³; Pulse for meetings authenticates voices and flags manipulated audio and video to help you prevent bad hires from accessing your systems.

Pulse for meetings instantly analyzes audio and video to identify deepfakes as well as impersonation attempts in live calls, reducing the risk of fraudsters impersonating executives and prompting wire transfers.

Pulse for meetings uses continuous location intelligence to spot VPN use or geographic mismatches, and surfaces that intelligence so meeting hosts can tell when a participant’s signal doesn’t match their claimed location.

Pulse for meetings integrates with Zoom, Webex, and Teams, with Google Meet support coming soon, to help determine if the people you see and hear are real; contact us to discuss your environment.

Yes. It verifies participants and detects deepfakes as the meeting happens, so decisions can be made with greater confidence.

1 Based on analysis of applicants to Pindrop’s own fully remote roles, “Why Your Hiring Process is Now a Cybersecurity Vulnerability,” June 2025

2 CNN, “British engineering giant Arup revealed as $25 million deepfake scam victim,” May 2024

3 Based on analysis of applicants to Pindrop’s own fully remote roles, “Why Your Hiring Process is Now a Cybersecurity Vulnerability,” June 2025